One very bad Apple

Why is Apple's commitment to privacy going down the drain?

Art: Apple Gatherers, Camille Pissarro, 1891

My fifth grade teacher, Mr. Stains, had a big energy about him. He imparted two American cultural norms upon me. First, he taught me about the religion of American football. (If you’re also interested in learning more, I recommend Billy Lynn’s Long Halftime Walk.) And second, he taught me about bumper stickers. He loved collecting them, and had a bunch tacked up on his bulletin board.

One of his favorites was, “Just because you’re paranoid, doesn’t mean they’re not out to get you, because they are.” The phrase has lately turned from a funny school memory into a jaded way of viewing a world that’s stacked against the end-consumer. Which is probably what Joseph Heller intended when he originally wrote Catch-22.

Bugs don’t get through closed windows

Recently, I’ve been thinking about this phrase in the context of Apple’s “commitment to privacy.”

Apple has always made a marketing pitch that it was the most secure platform. This all started with Steve Jobs, who was famously obsessive about having complete control over every aspect of the hardware and software.

Apple started this process early on.

By late 2013, when Apple released its iOS 7 system, the company was encrypting by default all third-party data stored on customers’ phones.

Since Apple is closed, it’s harder for hackers to get in. Security also means that Apple itself can’t reverse-engineer the code to see underlying messages.

The Apple of my FBI

This theory was put to a horrific stress test in 2015, when two shooters killed 14 people and injured 22 in San Bernardino, California. The shooters were killed in a shoot-out, and the police recovered three phones from the crime scene. One of them, the shooter’s work phone, was still intact, and locked with a numeric passcode. In the aftermath, the shooting was declared an act of terrorism. As a result, the federal government wanted to get involved.

In 2016, the FBI asked Apple to unlock the phone. Apple did not want to unlock the phone.

The iPhone was locked with a four-digit passcode that the FBI had been unable to crack. The FBI wanted Apple to create a special version of iOS that would accept an unlimited combination of passwords electronically, until the right one was found. The new iOS could be side-loaded onto the iPhone, leaving the data intact.

But Apple had refused. Cook and his team were convinced that a new unlocked version of iOS would be very, very dangerous.

After thinking the issue over with a small group, Tim Apple released a statement at 4:30 in the morning about the vital need for encryption and the threat to data security posed by the FBI’s request.

Specifically, the FBI wants us to make a new version of the iPhone operating system, circumventing several important security features, and install it on an iPhone recovered during the investigation. In the wrong hands, this software — which does not exist today — would have the potential to unlock any iPhone in someone’s physical possession.

The FBI may use different words to describe this tool, but make no mistake: Building a version of iOS that bypasses security in this way would undeniably create a backdoor. And while the government may argue that its use would be limited to this case, there is no way to guarantee such control.

The Apple of my iMessage

This was perhaps the first time I heard any large company CEO talking about privacy and actually putting his money where his mouth was. I was super impressed. That year, I switched over to the iPhone.

With everything I read after that, I became reassured, both by Apple and by third-party commentators, that Apple had no interest in anything except user privacy, because they didn’t need to sell data.

"The truth is we could make a ton of money if we monetized our customer, if our customer was our product," Cook said. "We’ve elected not to do that."

"Privacy to us is a human right. It's a civil liberty, and something that is unique to America. This is like freedom of speech and freedom of the press," Cook said. "Privacy is right up there with that for us."

And if I didn’t believe Tim, there were his privacy czars.

Indeed, any collection of Apple customer data requires sign-off from a committee of three “privacy czars” and a top executive, according to four former employees who worked on a variety of products that went through privacy vetting.

Approval is anything but automatic: products including the Siri voice-command feature and the recently scaled-back iAd advertising network were restricted over privacy concerns, these people said.

And if I didn’t believe Tim and the privacy czars, in 2016 Apple’s machine learning teams started talking publicly about the differential privacy they were working on.

Differential privacy is the practice of adding enough fake data, or noise, to the data feeding a machine learning algorithm that you can no longer tie data back to individuals, while still allowing ML predictions to work. Combined with federated learning, where machine learning models are trained and run directly on the mobile device without sending raw data to Apple’s servers, differential privacy results in really good, strong privacy.

Differential privacy [2] provides a mathematically rigorous definition of privacy and is one of the strongest guarantees of privacy available. It is rooted in the idea that carefully calibrated noise can mask a user’s data. When many people submit data, the noise that has been added averages out and meaningful information emerges.
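To make the “noise averages out” idea concrete, here is a minimal sketch using randomized response, a classic textbook mechanism for this kind of privacy. It is not Apple’s actual implementation. The function names, the 30% true rate, the user count, and the coin-flip probabilities are all assumptions picked for illustration.

```python
import random


def randomized_response(truth: bool, rng: random.Random) -> bool:
    """Report a noisy answer: tell the truth half the time, otherwise answer at random."""
    if rng.random() < 0.5:
        return truth
    # Second coin flip: a random yes/no that says nothing about the user.
    return rng.random() < 0.5


def estimate_true_rate(reports: list[bool]) -> float:
    """Undo the known noise in aggregate.

    P(report yes) = 0.5 * p + 0.25, so p = 2 * observed - 0.5.
    """
    observed = sum(reports) / len(reports)
    return 2 * observed - 0.5


def simulate(true_rate: float = 0.3, users: int = 100_000, seed: int = 0) -> float:
    """Hypothetical population: each user privately holds a yes/no fact."""
    rng = random.Random(seed)
    truths = [rng.random() < true_rate for _ in range(users)]
    reports = [randomized_response(t, rng) for t in truths]
    return estimate_true_rate(reports)


if __name__ == "__main__":
    print(f"estimated rate: {simulate():.3f}")
```

Any single report is deniable, since a “yes” may have come from the random coin rather than the user. Across many users, though, the estimate should land close to the true rate, which is the trade the quote above describes.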

But trusting a company is one thing. Trusting an independent person that you yourself trust is another. And Maciej Cegłowski said he trusted Apple.

Maciej runs Pinboard. He’s written essays that I’ve linked to so many times that I should be giving him royalties. Some of my favorites include Haunted by Data, The Website Obesity Crisis, and Build a Better Monster, which I saw him deliver live in Philly. Right now he’s in Hong Kong doing some of the best reporting on the protests, in spite of the fact that he is not a journalist.

In 2017, Maciej started working with political campaigns and journalists on securing their devices. He’s since advocated many times for people to use iPhones.

So, if Tim, the developers, the data scientists, the journalists, and Maciej were all telling me that I should use an Apple phone, I was going to use an Apple phone.

Doubts

And things were great for a year or so. But then, the paranoia started creeping in.

First, cryptographers were not really happy with the way iMessages were encrypted. In 2016, they wrote a paper about ways you could exploit iMessage.

In this paper, we conduct a thorough analysis of iMessage to determine the security of the protocol against a variety of attacks. Our analysis shows that iMessage has significant vulnerabilities that can be exploited by a sophisticated attacker. The practical implication of these attacks is that any party who gains access to iMessage ciphertexts may potentially decrypt them remotely and after the fact.

The researchers, including Matthew Green, went on to say,

“Our main recommendation is that Apple should replace the entirety of iMessage with a messaging system that has been properly designed and formally verified.”

That sounds serious? But I’m not a cryptography expert. So I let that one slide.

Then, there were reports that Apple contractors were listening to Siri.

According to that contractor, Siri interactions are sent to workers, who listen to the recording and are asked to grade it for a variety of factors, like whether the request was intentional or a false positive that accidentally triggered Siri, or if the response was helpful.

But I have never, ever turned on Siri, and the reports said that Apple anonymized the commands. I let it slide.

Then, there was the story that you still had to opt out of ad tracking on your iPhone because third-party apps were collecting stuff about you.

You might assume you can count on Apple to sweat all the privacy details. After all, it touted in a recent ad, “What happens on your iPhone stays on your iPhone.” My investigation suggests otherwise.

iPhone apps I discovered tracking me by passing information to third parties — just while I was asleep — include Microsoft OneDrive, Intuit’s Mint, Nike, Spotify, The Washington Post and IBM’s The Weather Channel. One app, the crime-alert service Citizen, shared personally identifiable information in violation of its published privacy policy.

Then, there were reports that Apple was running part of iCloud on AWS, a move that is fraught with its own security considerations and implications, not the least of which is that Apple is trusting a significant part of its infrastructure to a competitor.

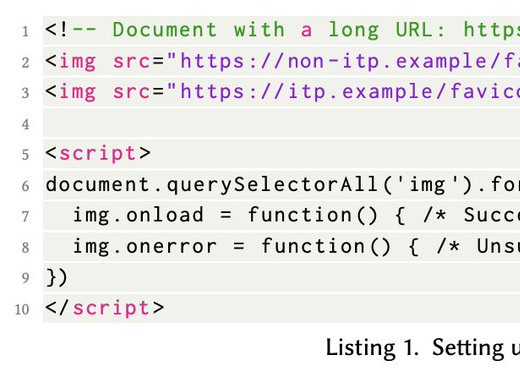

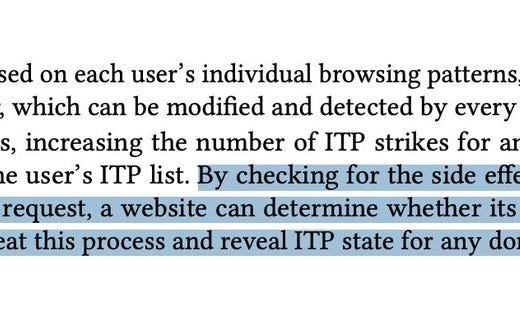

Then, there was the paper put out (by Google, but still) about how Apple’s Intelligent Tracking Prevention in Safari leaks data.

Then came the last straw this January, when it was revealed that Apple was not encrypting iCloud backups, at the request of the FBI. And Apple is again facing pressure from the FBI, which is again asking it to hand over phones, this time in connection with another shooting in Florida.

And, finally, whatever happened to the iPhone 5C that Tim Apple so valiantly fought the FBI for? The government was able to backdoor into it anyway, without Apple’s help.

Why, Apple, why?

In theory, we should all be very, very mad at Apple, who is playing in a big game of cross-hatch.

At the same time that it is handing over unencrypted data to governments, maintaining a big presence in China by doing what the Chinese government asks, and allowing targeted advertising, it’s running an enormous pro-privacy advertising campaign.

Just look at this:

And look at this ad that it put up at CES in Las Vegas this year, a trade show which it hasn’t even attended as a vendor in years.

What’s up

All we have to do is look at the workhorse models of Normcorian analysis: Apple’s history, its 10-K forms, and systems theory.

First, I think it’s important to differentiate two things: the privacy of Apple systems themselves (iCloud, iMessage, the phone), and the third-party app ecosystem on which the company also relies. The first is entirely Apple’s responsibility.

Apple started out with the Jobsian premise of being closed, because being closed means you can control every aspect. Its corporate structure was basically just product decisions coming down from on high.

But when Tim Cook took over, the small, sleek systems that Steve had such a tight grip over exploded in size. For example, Apple’s employee numbers have grown roughly fivefold, from 20k to over 100k, since 2008.

This in and of itself makes things hard to manage. There are 800 people working on the new iPhone camera alone. Imagine how many moving pieces that is. Now, add in iMessage. Add in the browser. Look! This diagram shows how many services are involved, and this is just for the AUDIO part of the phone. (Remember how complicated Ring is? Now multiply that by a million.)

So, how many people are working on the iOS ecosystem? Anywhere upwards of 5,000 (based on a super loose Google search). The phone itself and the OS have also grown far more complicated.

And the services Apple is offering are also a lot more diverse. It’s not just hardware anymore. It’s also software, streaming services, and peripherals like Watch.

However, Apple is facing immense competition.

The markets for the Company’s products and services are highly competitive and the Company is confronted by aggressive competition in all areas of its business.

This means the company has to move quickly and differentiate. It’s already gotten planned obsolescence down to a science for its current phone owners:

the Company must continually introduce new products, services and technologies, enhance existing products and services, effectively stimulate customer demand for new and upgraded products and services

So it has to lure new phone owners. And what better way to do that than to play up the privacy angle, something that’s been a growing concern for consumers? In the GDPR and CCPA era, privacy is a competitive advantage.

Given all of this, it’s impossible to monitor every single security threat. So when Apple goofs on a privacy thing, I assume that half of it was intentional, and half was because a group of project managers simply can’t oversee all the ways that a privacy setting can go wrong on a phone connected to the internet.

As Bruce Schneier said, security is really hard. And this is not even going into all the possible attack vectors that arise when a phone actively connects to the internet, including GPS tracking, which affects every phone.

Second, when the App Store launched in 2008, there were 500 apps. There are now over 2 million, and each of them could be tracking user data in any number of ways. If Apple locks everything down at the phone level, app developers will get angry and leave the platform. If it doesn’t, people get angry. Like YouTube, Apple has to tread a fine line between being permissive enough and being cancelled.

The truth of the matter is that any modern cell phone is like a very data-rich, leaky sieve, constantly giving out information about you in any number of ways, to any number of parties, without incentives for companies to rein it in. Which is why Edward Snowden had lawyers put their phones in the fridge.

Speaking of the NSA, Apple’s finally gotten so big that many governmental agencies are listening and making demands to get access to phones. There is the FBI. Then there is China, for whom Apple has already made a ton of concessions. And now, Apple has a Russia problem, too. Russia recently mandated that all cell phones pre-load software that tells the Russian government what’s up.

In November 2019, Russian parliament passed what’s become known as the “law against Apple.” The legislation will require all smartphone devices to preload a host of applications that may provide the Russian government with a glut of information about its citizens, including their location, finances, and private communications.

So, here we are, in 2020, with Apple in a bit of a pickle. It’s becoming so big that it’s not prioritizing security. At the same time, it needs to advertise privacy as a key differentiator as consumer tastes change. And, at the same time, it’s about to get cancelled by the FBI, China, and Russia.

And while it’s thinking over all of these things, it’s royally screwing over the consumer who came in search of a respite from being tracked. And what is the consumer doing? Well, this one in particular has limited ad tracking, stopped iCloud backups of messages, and has resumed her all-encompassing paranoia.

What I’m reading lately:

I’m on Python Bytes this week!

The Newsletter:

This newsletter is about a different angle on tech news. It goes out once a week to free subscribers, and once more to paid subscribers. If you like it, forward it!

The Author:

I’m a data scientist. Most of my free time is spent wrangling a preschooler and a baby, reading, and writing bad tweets. Find out more here or follow me on Twitter.